Could AI Replace An Entire Marketing Team?

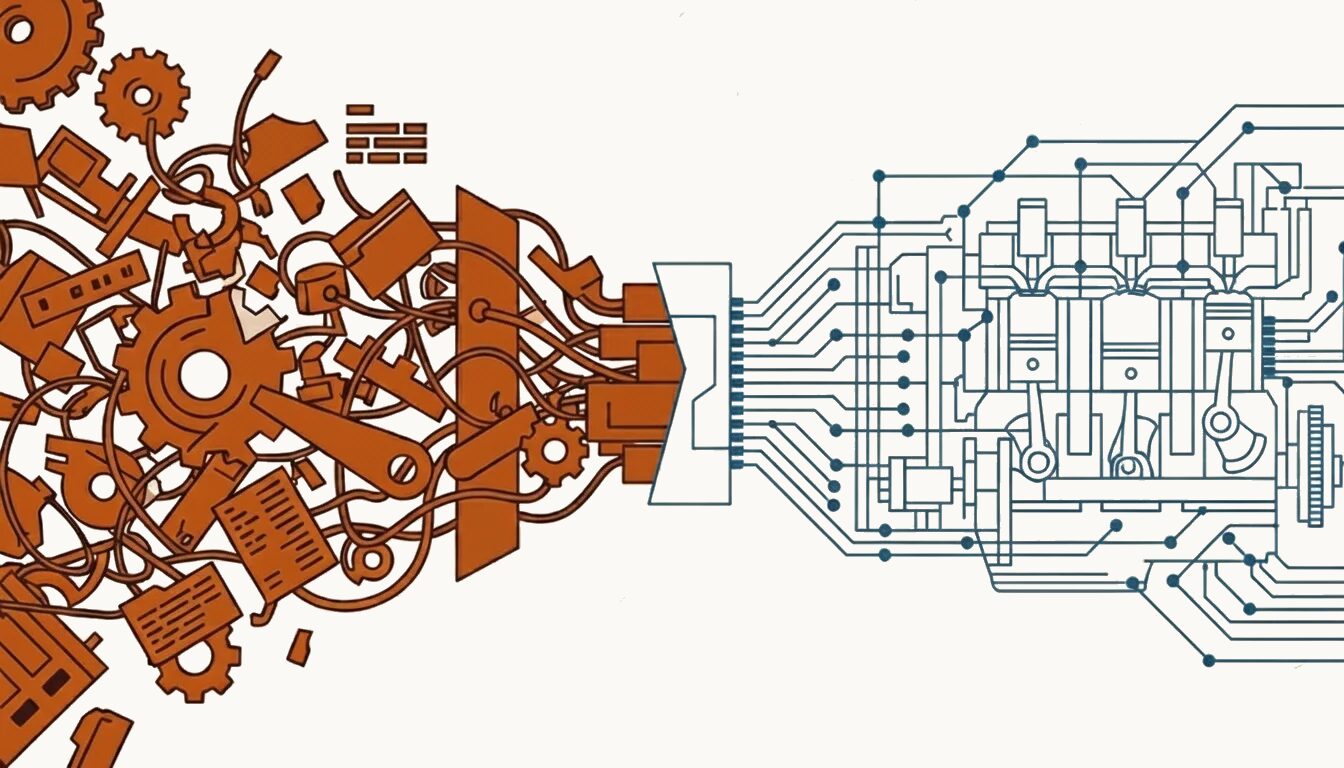

In my previous article, The AI Marketing Strategy Gap, I explored the “pile of parts” problem: the disconnect between AI adoption and strategic integration.

But it left a deeper question unanswered: Could AI replace the entire marketing function? Not augment. Not assist. Replace.

This is the first in a series exploring that question. Not to prove AI can replace marketers, but to understand the conditions under which it could, and what that means for how we build marketing teams today.

What’s Covered

- The Scale of the Problem

- The Wrong Questions

- GPT-3 and the “Black Box” Gap

- But AI Can Reason Systematically

- From Technique to Agentic

- Breaking Down Marketing Work: Atomic vs. Composite

- From Parts to Engines: The Operator Function

- AI Maturity Model for Marketing

- What This Means for Marketing Leaders

The Scale of the Problem

88% of organisations now use AI in at least one business function, yet most remain stuck in experimentation. The gap between adoption and results is widening, not closing.

That 88% figure comes from McKinsey’s State of AI 2025 report, and the 2025 Gartner Marketing Technology Survey paints a similar picture: 81% of martech leaders are piloting or fully implementing AI agents, yet utilisation of their overall martech stack sits at just 49%. That’s an improvement from 33% in 2023, but it still means roughly half of marketing technology capabilities go unused.

Meanwhile, the 2025 Marketing Technology Landscape now catalogues 15,384 solutions, up 9% from the previous year and roughly 100 times the number listed in 2011. Of the new tools added this year, 77% were AI-native.

More tools. More AI. Same fundamental problem.

CMOs Are Paying the Price

The 2025 Gartner CMO Spend Survey reveals that 59% of CMOs report insufficient budget to execute their strategy. Marketing budgets remain flat at 7.7% of company revenue. CMOs are expected to do more with less, and 65% believe AI will dramatically transform their role within the next two years.

Yet while budgets stagnate and expectations rise, waste accelerates:

- SaaS Bloat: The average enterprise manages 275 SaaS applications (Zylo 2025 SaaS Management Index), yet uses only 47% of the licenses purchased.

- Rising Costs: SaaS spend per employee has risen to $4,830, up 21.9% year-over-year, driven by unexpected consumption-based AI pricing models.

- Projected Waste: Organisations without centralised visibility will overspend by at least 25% by 2027 due to redundancy (Gartner Magic Quadrant for SaaS Management Platforms).

Marketing is both a contributor to and a victim of this waste. Bleeding budget into tools that don’t connect. Paying for potential rather than performance.

More AI won’t fix this. This isn’t a technology problem. It’s an architecture problem.

The Wrong Questions

Most AI marketing conversations start with the wrong questions: “Which AI tools should I use?” and “How do I automate this task?” This leads to what I’ve seen repeatedly: marketing teams amassing AI tools like puzzle pieces, hoping the picture will eventually emerge. It rarely does.

A better question: How do I turn disconnected tools into a connected marketing system that performs autonomously?

This reframe changes everything. It shifts focus from tools to architecture. From features to outcomes. From prompts to workflows.

But to build a system, you need to understand how the components think. And for a long time, that was impossible.

GPT-3 and the “Black Box” Gap

In the early GPT-3 era (2020), we couldn’t see how AI reasoned. When you prompted an LLM to “write SEO-optimised content for our product launch,” the process was opaque. You saw input and output. The logic in between remained hidden inside a “black box.”

Contrast this with how an experienced content marketer works:

- Audience: Who is this for? What stage of the journey are they in?

- Landscape: What are competitors saying? Where’s the white space?

- Strategy: What’s our unique angle? What proof points support it?

- Success: What does success look like? Traffic? Conversions? Brand lift?

They run through this mental checklist, consciously or not, before executing. LLMs didn’t do that then. I called it the Black Box Gap. And I needed to close it.

But AI Can Reason Systematically

In 2022, researchers at Google published a landmark study on Chain-of-Thought (CoT) prompting. When LLMs are given step-by-step reasoning examples, their performance on complex tasks improves dramatically: from 17.9% to 58.1% on a mathematical reasoning benchmark.

A follow-up study by the University of Tokyo and Google found that simply adding “Let’s think step by step” to the prompt, with no worked examples at all, triggered similar reasoning capabilities. On one benchmark, accuracy jumped from 17.7% to 78.7%. More than a 4x improvement from five words.
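
To make the technique concrete, here is a minimal sketch of how a zero-shot Chain-of-Thought prompt could be assembled. The helper names and the `Q:`/`A:` layout are assumptions for illustration, no model is called, and the marketing question is made up. The second helper only reproduces the arithmetic behind the benchmark figures quoted above.

```python
# Hypothetical helpers: nothing here calls a real model or API.
COT_TRIGGER = "Let's think step by step."


def build_zero_shot_cot_prompt(question: str) -> str:
    """Return the question followed by the trigger phrase, with no worked examples."""
    return f"Q: {question.strip()}\nA: {COT_TRIGGER}"


def relative_gain(before_pct: float, after_pct: float) -> float:
    """How many times higher the accuracy is after the change."""
    return after_pct / before_pct


prompt = build_zero_shot_cot_prompt(
    "Which audience segment should our product launch email target first?"
)
print(prompt)

# Benchmark figures quoted in the text: 17.7% -> 78.7%.
print(round(relative_gain(17.7, 78.7), 1))  # 4.4
```

The point of the sketch is how little changes between a standard prompt and a zero-shot CoT prompt: one fixed sentence, appended once.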

This Zero-shot Chain-of-Thought research revealed something profound: LLMs contain latent reasoning capabilities that emerge when explicitly activated. The models weren’t just pattern-matching. They could decompose problems the way experienced practitioners do when properly prompted.

The implication for marketing: AI doesn’t just generate content. It can reason through problems (audience analysis, competitive positioning, content strategy) when given the right structure.

From Technique to Agentic

The Chain-of-Thought discovery was so significant that this capability is now built directly into modern AI models. What started as a prompting technique has become native architecture.

OpenAI’s reasoning models (o1, o3, o4-mini) perform step-by-step thinking automatically, without explicit prompting. As Microsoft documentation notes, models like the o1-series have built-in chain-of-thought reasoning, meaning they “internally reason through steps without needing explicit coaxing.”

The Wharton Prompting Science Report (June 2025) confirmed this evolution: “For models with built-in reasoning capabilities, CoT prompting produced minimal benefits… Many models perform CoT-like reasoning by default, even without explicit instructions.”

| Era | Approach | What It Means |
|---|---|---|
| 2020 to 2021 | Standard Prompting | Output only; reasoning process hidden (“black box”) |
| 2022 to 2023 | CoT Prompting | Users activate reasoning with “let’s think step by step” |
| 2024 to 2025 | Built-in Reasoning | Models trained with internal chain-of-thought; reasoning happens automatically |
| 2025+ | Agentic AI | Autonomous agents that reason, decide, and act across workflows |

The evolution from CoT prompting to built-in reasoning has culminated in what the industry now calls “Agentic AI”: autonomous systems that plan and carry out multi-step work rather than only responding to individual prompts.

BCG’s recent research describes the shift: “Past innovations, from CRM to marketing automation, helped streamline discrete steps. Agentic AI goes further. These systems introduce autonomy: they make decisions, trigger actions, and learn across cycles.”

McKinsey’s analysis is direct: “Success calls for designing processes around agents, not bolting agents onto legacy processes.”

Sound familiar? This is the “pile of parts” problem at a new scale.

Breaking Down Marketing Work: Atomic vs. Composite

If AI can reason and act autonomously, how do we apply that ability in marketing? I started by borrowing the concept of Jobs To Be Done (JTBD) from product thinking.

JTBD is typically used to understand why customers “hire” products to solve problems. But I saw a parallel: What if we applied the same lens to marketing work itself?

In my synthesis, every marketing activity can be decomposed into discrete jobs with clear inputs, outputs, and success criteria. This led me to identify two types:

Atomic JTBDs

Single, well-defined tasks with clear parameters:

- Analyse competitor pricing pages

- Generate 10 headline variants for A/B testing

- Score leads based on engagement signals

- Extract key themes from customer reviews

Composite JTBDs

Complex workflows requiring multiple atomic jobs in sequence:

- Develop Q1 content strategy (requires: audience analysis → competitive review → theme identification → content calendar → brief creation)

- Launch product campaign (requires: positioning → messaging → creative development → channel planning → execution → optimisation)

The insight: AI excels at atomic jobs today. Composite jobs require orchestration: the systematic integration of atomic jobs into coherent workflows.
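
The atomic/composite distinction can be sketched as a small data structure. This is an illustrative assumption, not an implementation from the framework: `AtomicJob`, `CompositeJob`, and the two toy step functions are hypothetical names, and the steps use simple string handling where a real system would call a model or an API.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Any, Callable

Context = dict[str, Any]


@dataclass
class AtomicJob:
    """One well-defined job: reads from the context, returns its output."""
    name: str
    run: Callable[[Context], Context]


@dataclass
class CompositeJob:
    """An ordered sequence of atomic jobs that share one context."""
    name: str
    steps: list[AtomicJob] = field(default_factory=list)

    def execute(self, context: Context) -> Context:
        for step in self.steps:
            # Each step sees everything produced so far and adds its own output.
            context = {**context, **step.run(context)}
        return context


def extract_themes(ctx: Context) -> Context:
    # Toy stand-in: a word that appears in two or more reviews counts as a theme.
    counts = Counter(w for review in ctx["reviews"] for w in set(review.lower().split()))
    return {"themes": sorted(w for w, n in counts.items() if n >= 2)}


def headline_variants(ctx: Context) -> Context:
    # Toy stand-in for a generation step: one headline per theme.
    return {"headlines": [f"What customers say about {t}" for t in ctx["themes"]]}


reviews_to_headlines = CompositeJob(
    "Turn review themes into headline variants",
    steps=[
        AtomicJob("Extract key themes from customer reviews", extract_themes),
        AtomicJob("Generate headline variants", headline_variants),
    ],
)

result = reviews_to_headlines.execute(
    {"reviews": ["Fast onboarding", "Onboarding was fast", "Great support"]}
)
print(result["themes"])     # ['fast', 'onboarding']
print(result["headlines"])
```

Each atomic job is testable on its own. The composite adds nothing except ordering and a shared context, which is exactly the part that needs designing.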

Example: Content Creation as Composite JTBD

A “create blog post” request seems simple. But decomposed:

| Step | Atomic JTBD | Input | Output |
|---|---|---|---|
| 1 | Analyse target audience | ICP data, search behaviour | Audience insights |
| 2 | Research topic landscape | Keywords, competitor content | Content gaps |
| 3 | Define content angle | Insights + gaps | Strategic brief |
| 4 | Generate draft | Brief + brand voice | Draft content |
| 5 | Optimise for SEO | Draft + keywords | Optimised content |
| 6 | Review and refine | Content + guidelines | Final content |

Example: Competitive Response as Composite JTBD

A competitor launches a new feature. Your response seems reactive. But decomposed:

| Step | Atomic JTBD | Input | Output |
|---|---|---|---|
| 1 | Detect competitive signal | News feeds, social monitoring | Alert trigger |
| 2 | Analyse competitor positioning | Landing page, messaging, pricing | Competitive brief |
| 3 | Assess strategic implications | Brief + product roadmap | Response recommendation |
| 4 | Draft counter-positioning | Recommendation + brand voice | Messaging options |
| 5 | Select channels and assets | Messaging + audience data | Distribution plan |
| 6 | Execute and monitor | Plan + performance baseline | Live response + metrics |

What appears to be instinct is, in fact, a workflow. The experienced marketer runs this loop unconsciously. AI makes it explicit and repeatable.

Example: Lead Nurturing as Composite JTBD

A new lead enters your funnel. The nurture sequence seems automated. But decomposed:

| Step | Atomic JTBD | Input | Output |
|---|---|---|---|
| 1 | Score incoming lead | Form data, firmographics | Lead score |
| 2 | Segment by intent | Score + behaviour signals | Nurture track assignment |
| 3 | Select content sequence | Segment + content library | Personalised journey |
| 4 | Generate personalised touchpoints | Journey + CRM data | Email/ad variants |
| 5 | Monitor engagement | Opens, clicks, responses | Engagement score |
| 6 | Trigger handoff or re-engage | Score threshold | SQL or re-nurture |

Each step is an atomic JTBD that AI can execute today. Most marketing automation handles fragments: steps 3 to 5 of lead nurturing, for example. The gap is orchestration.
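
As a rough illustration of how the decision steps in the lead nurturing table fit together, the sketch below covers steps 1, 2, and 6 only. The `Lead` fields, the scoring weights, and the thresholds are all assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    email: str
    employees: int            # firmographic signal (assumed field)
    pricing_page_views: int   # behaviour signal (assumed field)
    email_clicks: int         # engagement signal (assumed field)


def score_lead(lead: Lead) -> int:
    """Step 1: toy additive score; the weights are illustrative assumptions."""
    score = 30 if lead.employees >= 200 else 0
    score += min(lead.pricing_page_views, 3) * 15
    score += min(lead.email_clicks, 5) * 5
    return score


def segment_by_intent(score: int) -> str:
    """Step 2: assign a nurture track from the score (assumed cut-offs)."""
    if score >= 70:
        return "high-intent"
    if score >= 40:
        return "evaluating"
    return "early-stage"


def handoff_or_renurture(score: int, sql_threshold: int = 70) -> str:
    """Step 6: hand off to sales at or above the threshold, otherwise keep nurturing."""
    return "handoff_to_sales" if score >= sql_threshold else "re_nurture"


hot = Lead("ana@example.com", employees=500, pricing_page_views=2, email_clicks=3)
cold = Lead("ben@example.com", employees=20, pricing_page_views=1, email_clicks=2)

for lead in (hot, cold):
    s = score_lead(lead)
    print(lead.email, s, segment_by_intent(s), handoff_or_renurture(s))
```

Every function here is easy to build in isolation. The orchestration question is who decides the threshold, what happens between steps, and where the engagement data from step 5 feeds back into the score.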

From Parts to Engines: The Operator Function

Having atomic or composite JTBDs is like having engine parts. But parts don’t make an engine. You need the architecture and someone to design and run it.

I call this the Operator function: the strategic orchestration that connects atomic jobs into coherent workflows.

Think of the martech landscape’s 15,384 solutions as components. Each does something useful in isolation. But without a unifying architecture:

- Data doesn’t flow between systems

- Insights don’t inform decisions

- Optimisations don’t compound

- Strategy remains disconnected from execution

This is why “pile of parts” is the defining problem of AI marketing today.

The Evolution of Connection

For years, we solved this with “digital duct tape”: linear automation tools like Zapier to glue APIs together. Today, we have far more powerful options:

- Standard Protocols: The Model Context Protocol (MCP) acts as a universal “socket,” giving AI a standard way to plug into data sources and tools instead of requiring a custom integration for each one.

- Advanced Orchestrators: Platforms like n8n enable complex, agent-based workflows with loops and memory.

But having powerful tools creates a new trap: The Orchestration Illusion.

Just because you can build a complex autonomous workflow in n8n doesn’t mean you should.

- Privacy Risk: If you pipe customer data into an agent without privacy guardrails, you are automating compliance risk.

- Brand Risk: If you connect a content generator to a social publisher without a “Brand Voice” filter, you are automating brand damage.

- Cost Risk: “Connected” means “consumption.” Continuous agent loops across multiple SaaS subscriptions drive massive API and compute costs. Inefficient orchestration creates “token bloat”: paying for AI to read the same data thousands of times.
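
The cost risk is easy to underestimate, so here is a back-of-the-envelope sketch of “token bloat.” The function name, the token counts, and the price per million input tokens are hypothetical. The calculation also ignores output tokens and any new input added on each loop, so treat it as a lower bound on the gap.

```python
def loop_input_cost_usd(
    context_tokens: int,
    iterations: int,
    price_per_million_tokens: float,
    reread_each_iteration: bool = True,
) -> float:
    """Input-token cost of an agent loop that does or does not re-read its context."""
    reads = iterations if reread_each_iteration else 1
    return context_tokens * reads * price_per_million_tokens / 1_000_000


# Assumed numbers: a 20,000-token context, 5,000 loop iterations per month,
# and a hypothetical price of $3.00 per million input tokens.
naive = loop_input_cost_usd(20_000, 5_000, 3.00, reread_each_iteration=True)
read_once = loop_input_cost_usd(20_000, 5_000, 3.00, reread_each_iteration=False)

print(f"Re-reading every loop: ${naive:,.2f}")
print(f"Reading once:          ${read_once:,.2f}")
```

Under these assumptions the same workflow costs $300.00 or $0.06 depending on one design decision, and that decision belongs to the Operator rather than to the tool.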

Connectivity is not strategy.

The technology solves the plumbing: how agents talk to each other. The Operator solves the design: what agents are allowed to say or do, and why.

AI Maturity Model for Marketing

How does an Operator know what level of orchestration each job requires? A simple atomic task needs different handling than a composite workflow with decision points. I needed a framework to classify jobs by autonomy level.

SAE International’s L0-L5 framework for autonomous vehicles has become the standard for discussing machine autonomy. Recently, researchers at the University of Washington adapted this thinking for AI agents, proposing five levels based on the user’s role: operator, collaborator, consultant, approver, and observer.

Drawing from both frameworks, I propose a maturity model specifically for AI marketing systems:

| Level | Name | Description | Example | Feasibility |
|---|---|---|---|---|
| L1 | Prompt Assistant | Single prompts, human reviews all output | “Write 5 email subject lines” | Widely available |
| L2 | Workflow Automation | Chained prompts with conditional logic | Brief → draft → SEO check → schedule | Available with setup |
| L3 | Supervised Autonomy | AI executes workflows, human approves decisions | AI drafts campaign; marketer approves before publishing | Emerging |
| L4 | Guided Autonomy | AI proposes and executes within guardrails | AI adjusts ad spend within budget limits | Early adoption |
| L5 | Goal-Based Orchestration | AI determines strategy from objectives | “Increase MQLs 20%” → AI selects channels, content, timing | Frontier |
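
One way to make the levels operational is to encode them and let the level decide when a human must sign off. The sketch below is a simplification under stated assumptions: it treats L1 to L3 as always requiring human approval, and L4 and L5 as escalating only when an action falls outside its guardrails. The function names and budget figures are hypothetical.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    PROMPT_ASSISTANT = 1
    WORKFLOW_AUTOMATION = 2
    SUPERVISED_AUTONOMY = 3
    GUIDED_AUTONOMY = 4
    GOAL_BASED_ORCHESTRATION = 5


def needs_human_approval(level: AutonomyLevel, within_guardrails: bool = True) -> bool:
    """Assumed policy: L1 to L3 always need sign-off; L4 and L5 only outside guardrails."""
    if level <= AutonomyLevel.SUPERVISED_AUTONOMY:
        return True
    return not within_guardrails


def route_spend_change(level: AutonomyLevel, proposed_daily_spend: float, budget_limit: float) -> str:
    """The L4 example from the table: adjust ad spend within budget limits."""
    within = proposed_daily_spend <= budget_limit
    return "needs_approval" if needs_human_approval(level, within) else "auto_execute"


print(route_spend_change(AutonomyLevel.SUPERVISED_AUTONOMY, 900, 1000))  # needs_approval
print(route_spend_change(AutonomyLevel.GUIDED_AUTONOMY, 900, 1000))      # auto_execute
print(route_spend_change(AutonomyLevel.GUIDED_AUTONOMY, 1200, 1000))     # needs_approval
```

The same job can sit at different levels in different organisations. What matters is that the level is an explicit setting rather than a side effect of whichever tool happens to run the job.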

According to McKinsey’s State of AI 2025 report, 23% of organisations are scaling agentic AI systems in at least one business function, with an additional 39% experimenting. But the use of agents is not yet widespread: most scaling efforts are occurring in only one or two functions.

We’re primarily operating at L1 to L3 today.

What This Means for Marketing Leaders

The question “Could AI replace my entire marketing team?” has a nuanced answer: Not with tools alone. But with the right architecture, led by a skilled Operator, AI can potentially replace the execution layer of a marketing team.

The current state (88% adoption, 49% utilisation, 15,384 solutions) reflects what happens when you accumulate without architecting.

The 2025 Gartner CMO Spend Survey found that GenAI investments are delivering ROI in three areas (figures are the share of CMOs reporting each):

- 49% improved time efficiency

- 40% improved cost efficiency

- 27% increased capacity to produce content

But these are efficiency gains, not transformation gains. The transformation comes from the architectural and orchestration expertise of an experienced marketing operator.

What’s Next

This is Part 1 in a series exploring whether AI can replace the marketing function, and what it takes to build systems that work.

In Part 2: The AI Marketing Framework, I share the architecture thesis that emerged from this exploration:

- Three layers that every AI marketing system needs

- 11 engines that cover the complete marketing function

- The autonomy progression from L1 to L5

- The business case that makes this viable

This isn’t just theory. I’m building parts of this framework at growthsetting.com, together with Maciej Wisniewski, to test whether the architecture works.

Key Concepts

| Term | Definition |
|---|---|
| Pile of Parts Problem | The disconnect between AI/martech adoption and strategic integration. Accumulating tools without building a system. |
| Atomic JTBD | Single, well-defined marketing tasks with clear inputs, outputs, and success criteria. |
| Composite JTBD | Complex marketing workflows requiring multiple atomic jobs executed in sequence. |
| Operator Function | The strategic orchestration that connects atomic jobs into coherent workflows. The architecture role AI cannot replace. |
| Orchestration Illusion | The false assumption that connecting tools creates a system. Connectivity enables data flow; architecture determines whether that flow is intelligent. |
| L1 to L5 Maturity Model | A framework for AI marketing system autonomy, ranging from Prompt Assistant (L1) to Goal-Based Orchestration (L5). |
| Agentic AI | Autonomous AI systems that reason, make decisions, trigger actions, and learn across cycles without requiring explicit prompts for each step. |

FAQ

Could AI replace an entire marketing team?

Not with tools alone. But with the right architecture, led by a skilled Operator, AI can potentially replace the execution layer of a marketing team. The transformation requires moving from accumulating tools to building connected systems with clear workflows.

What is the pile of parts problem in AI marketing?

The pile of parts problem describes the disconnect between AI/martech adoption and strategic integration. It’s like having world-class car parts without an engine block. Tools exist, but no architecture connects them to measurable marketing outcomes.

What is the difference between atomic and composite JTBDs?

Atomic JTBDs are single, well-defined tasks with clear inputs and outputs, like generating headline variants or scoring leads. Composite JTBDs are complex workflows requiring multiple atomic jobs in sequence, like developing a content strategy or launching a product campaign.

What is the Operator function in AI marketing?

The Operator function is the strategic orchestration that connects atomic jobs into coherent workflows. It’s the architecture role that determines how AI agents communicate, what they’re allowed to do, and how their outputs connect to business outcomes.

What are the L1 to L5 levels of AI marketing maturity?

L1 is Prompt Assistant (single prompts, human reviews all output). L2 is Workflow Automation (chained prompts with logic). L3 is Supervised Autonomy (AI executes, human approves). L4 is Guided Autonomy (AI acts within guardrails). L5 is Goal-Based Orchestration (AI determines strategy from objectives).

What is agentic AI in marketing?

Agentic AI refers to autonomous systems that don’t just respond to prompts. They make decisions, trigger actions, and learn across cycles without requiring explicit instructions for each step. This represents the evolution from prompting techniques to built-in reasoning capabilities.